Featured Article

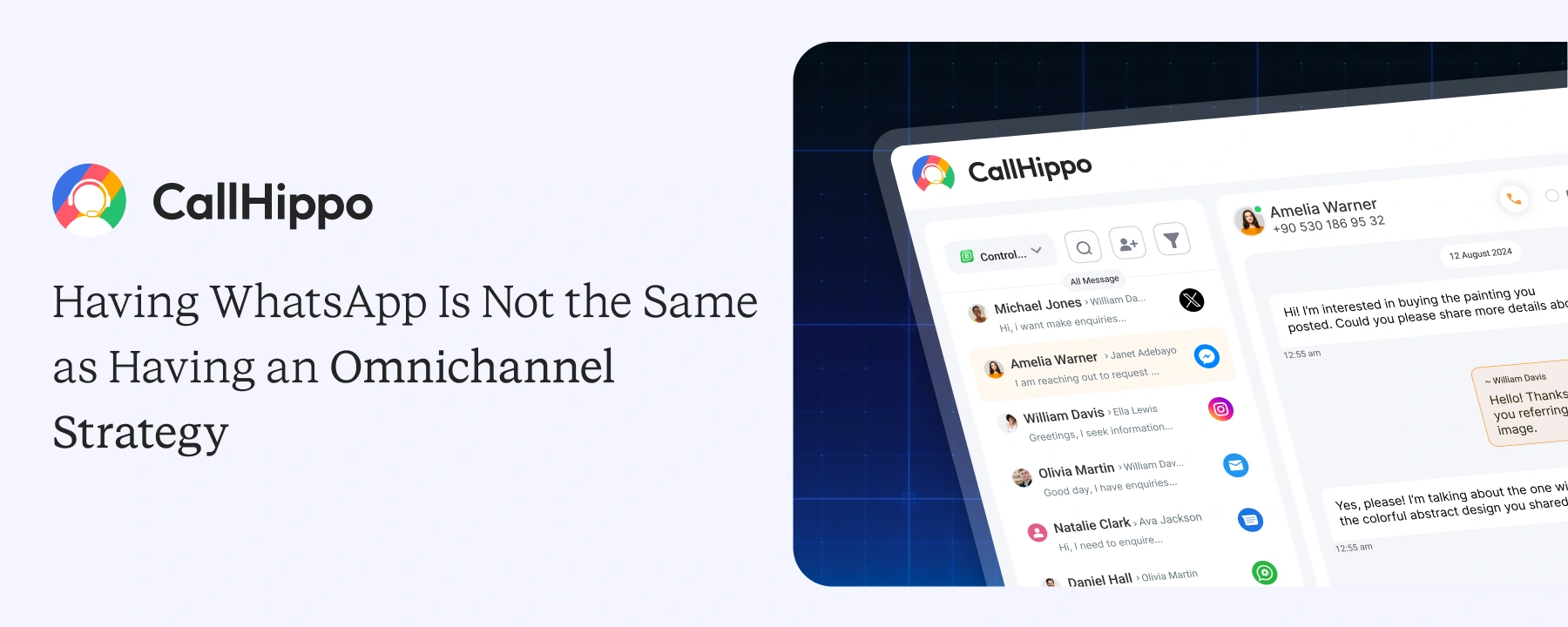

Why Businesses Need More Than WhatsApp for Omnichannel Communication

WhatsApp Business is just one channel. Your customers also call, text, email, and message across many channels every day. Treating one channel as a complete strategy leaves the rest of the customer experience exposed. The gap between...

AI Cold Calling: How It Works, What It Delivers, and How to Set It Up

How to Get a Second Phone Number (3 Real Methods That Work)

Best VoIP Providers for Business in 2026: Tested and Ranked

- Small Business

- VoIP

- Call Center

- Customer Service

- How to Call

- AI

Set Up Your Phone System in Less Than 3 Minutes

From buying a number to making the first call, all it takes is 3 minutes to set up your virtual phone system.

1. Buy Numbers

2. Add Users

3. Start Calling

4. Track Calls